For years companies have been buying reporting software, such as Cognos or Business Objects, hoping to solve their reporting challenges with a single purchase. Today companies are buying newer tools like Power BI and Tableau with similar expectations.

Despite the considerable power of these tools, most users are disappointed when they realize that software alone can’t solve the complex challenges of enterprise reporting. In the end, your level of business intelligence success or failure will depend less on what reporting tool you use and more on how you implement it.

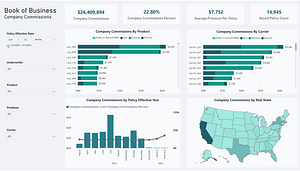

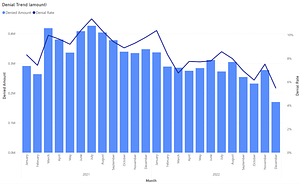

Reporting software should be used to explore data and create visualizations that effectively communicate the story the data is telling and reveal important insights. In most cases, reporting software is also the logical choice for distributing and consuming reports, and for enabling threshold-triggered notifications and exception reporting.

Unfortunately, data coming out of transactional and back-office applications almost always requires some degree of modification before it can be used in reports. In its natural state, data is rarely “report-ready.” Reporting tools may include features that can assist with this work, but no tool of any kind can yet solve this problem entirely.

To be clear, it’s entirely possible to point your reporting software at a source system database, and you can do a remarkable amount of data transformation right in the tool. Often you can use it to integrate, cleanse and organize data in a way that makes report development easy for typical business users.

However, most companies that take this approach have difficulty eliminating their dependency on Excel. Individual departments and business analysts already have expertise in Excel. They typically also have historical data stored in Excel that may not be available anywhere else. Further, they probably have macros and formulas in spreadsheets that already perform significant data transformation. So, when given the option to leverage all of that or to recreate it from scratch in a reporting tool, many opt to keep Excel as part of the overall solution. In fact, we’re told the most common data source feeding Tableau today is Excel.

Furthermore, this approach struggles, or fails completely, when it comes to multi-source data integration, solving data quality issues, creating derived data, persistent staging of historical data and usability for self-service analytics.
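To make these categories concrete, here is a minimal Python sketch of three of them: multi-source integration, cleansing and derived data. The two source systems, the field names and the values are all hypothetical, invented purely for illustration, and real preparation logic is usually far more involved.

```python
# Hypothetical records from two source systems (all names and values are illustrative).
crm_customers = [
    {"customer_id": " c-001 ", "name": "Acme Corp", "region": "west"},
    {"customer_id": "C-002", "name": "Globex", "region": None},
]
erp_orders = [
    {"cust": "C-001", "revenue": 1200.0, "cost": 900.0},
    {"cust": "c-002", "revenue": 500.0, "cost": 450.0},
    {"cust": "C-001", "revenue": 300.0, "cost": 150.0},
]


def normalize_id(raw):
    """Cleanse: make customer keys from different systems comparable."""
    return raw.strip().upper()


def prepare(customers, orders):
    """Integrate both sources into one report-ready row per customer."""
    by_id = {}
    for c in customers:
        cid = normalize_id(c["customer_id"])
        by_id[cid] = {
            "customer_id": cid,
            "name": c["name"],
            # Cleanse: replace a missing region with an explicit placeholder.
            "region": (c["region"] or "UNKNOWN").upper(),
            "revenue": 0.0,
            "cost": 0.0,
        }
    for o in orders:
        row = by_id.get(normalize_id(o["cust"]))
        if row is None:
            continue  # orders without a matching customer are skipped in this sketch
        row["revenue"] += o["revenue"]
        row["cost"] += o["cost"]
    for row in by_id.values():
        # Derived data: margin exists in neither source system.
        row["margin"] = row["revenue"] - row["cost"]
    return sorted(by_id.values(), key=lambda r: r["customer_id"])


report_ready = prepare(crm_customers, erp_orders)
```

Even this toy case needs key normalization, a default for a missing value and an aggregation step before a margin can be reported. Rebuilding that logic inside every report or workbook is where the in-tool approach tends to break down.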

The solution to all of these challenges is to separate data preparation from data consumption. This entails developing a custom, purpose-built series of data preparation algorithms we like to refer to, collectively, as a “data solution” that prepares the data before it ever reaches Tableau or other reporting tools.

Data solutions can take many forms, from a persistent staging area to a dimensionally-modelled data warehouse and semantic layer. Regardless of the selected architecture, or the tools used to create it, every data solution does one thing: it provides a central repository of report-ready data that any reporting tool can consume and that business users can easily understand and manipulate, whichever tool they use to access it.
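As a rough illustration of the idea, the sketch below uses Python's built-in `sqlite3` module as a stand-in for the central repository. The table, view and function names are hypothetical, and an in-memory database is assumed only to keep the example self-contained; a real data solution would sit on a proper warehouse platform.

```python
import sqlite3


def build_repository(conn):
    """Load prepared data once and publish a shared, report-ready view."""
    conn.execute(
        "CREATE TABLE sales_prepared (region TEXT, product TEXT, revenue REAL)"
    )
    conn.executemany(
        "INSERT INTO sales_prepared VALUES (?, ?, ?)",
        [
            ("WEST", "Widget", 1500.0),
            ("WEST", "Gadget", 250.0),
            ("EAST", "Widget", 500.0),
        ],
    )
    # The business definition of "revenue by region" lives in one place.
    conn.execute(
        "CREATE VIEW revenue_by_region AS "
        "SELECT region, SUM(revenue) AS total_revenue "
        "FROM sales_prepared GROUP BY region"
    )
    conn.commit()


def finance_report(conn):
    """One consumer: a tabular report over the shared view."""
    return conn.execute(
        "SELECT region, total_revenue FROM revenue_by_region ORDER BY region"
    ).fetchall()


def sales_dashboard_top_region(conn):
    """Another consumer: a dashboard tile reading the same view."""
    return conn.execute(
        "SELECT region FROM revenue_by_region "
        "ORDER BY total_revenue DESC LIMIT 1"
    ).fetchone()[0]


conn = sqlite3.connect(":memory:")
build_repository(conn)
```

Both consumers read the same view, so neither one re-implements the aggregation, and a change to the definition would reach both at once.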

By de-coupling data preparation from reporting, the centralized approach offers serious advantages. One obvious advantage is the ability to “build once and deploy many.” In other words, the algorithms and logic used for data preparation can be developed just once, with no duplication of effort across departments, and all parts of the organization can leverage the resulting data set.

Other benefits include higher data quality, higher data usability and greatly reduced data management overhead. And of course it allows you to finally move beyond the practice of preparing data in silos using spreadsheets. This yields the most important advantage afforded by centralized data preparation, a “single version of the truth.” With a single, governed data set, built and utilized by everyone, arguments about whose data is “right” become a distant memory. This in turn facilitates company-wide cooperation around performance improvement in a way the siloed approach can’t.

Companies electing to develop a centralized data solution must be prepared to make an additional investment of both time and money. They must also gain buy-in across the organization in order to succeed. Fortunately, this effort will be well repaid with the creation of a company-wide culture of fact-based decision making and performance optimization, and the success that comes with it.